Attackers and defenders are now playing with the same toys: powerful AI models that can find and exploit zero-day vulnerabilities in hours. At Google Cloud Next ’26, Google and Wiz unveiled a set of AI-driven defenses designed to shrink the time between discovery and remediation — and to automate much of the manual work that has left security teams lagging behind. Together, the announcements signal a shift toward agentic, cross-cloud security controls that try to match offensive automation with equally fast and intelligent defenses.

The narrowing window between vulnerability and exploit

AI has accelerated the pace of exploitation. A striking example is Anthropic’s Claude Mythos Preview, released to roughly 40 organizations through Project Glasswing, which can autonomously find and exploit zero-day vulnerabilities. While Anthropic judged such capabilities too dangerous for broad release, the existence of these models makes it likely that comparable tools will proliferate. That raises the stakes: organizations can no longer rely on slow, manual triage and one-off detection rules when attackers can iterate and deploy exploits in hours.

Google’s agentic answer

Google Cloud introduced three new AI agents (all in preview) into Google Security Operations, its cloud-native SIEM and SOAR platform. These build on an existing Triage and Investigation agent that Google says processed more than 5 million alerts over the past year and reduced a typical 30-minute manual analysis to roughly 60 seconds. The newly announced agents are:

- Threat Hunting agent
  - Proactively searches for novel attack patterns and adversary behaviors that bypass rule-based detections.
  - Looks for subtle, emergent indicators that automated rule sets may miss.

- Detection Engineering agent
  - Identifies gaps in an organization’s detection coverage.
  - Automatically generates new detections for realistic threat scenarios, converting detection engineering from a highly manual craft into a more automated pipeline.

- Third-Party Context agent
  - Enriches security workflows with external contextual data to help tie alerts and detections to broader threat intelligence and vendor signals.

Taken together with the Triage agent, these four agents aim to cover the majority of the security operations workflow: triage noisy alert volumes, hunt for what rules miss, close detection gaps, and enrich decisions with outside intelligence.

Why Google is betting on agentic defense

Google’s strategy, emphasized again at Next ’26, is that defense must be as agentic as offense and must operate across every cloud. That is why Google is placing these agents inside its SIEM/SOAR stack and offering the defensive tooling on its AI platform (the service formerly known as Vertex AI, now the Google Gemini Agent Platform). Google is not using the Anthropic model itself in these launches, but that model belongs to the broader ecosystem of agent-capable models that both attackers and defenders will have access to.

Wiz: extending AI-native security across clouds and dev workflows

Wiz, now part of Google Cloud, used Next ’26 to roll out a set of features and integrations that reflect the realities of AI-native development and multi-cloud footprints. Rather than introducing an entirely new product line, Wiz expanded support across third-party clouds and developer tooling, and added automation intended to catch problems earlier in the development lifecycle.

Key moves from Wiz include:

- Extending its AI-Application Protection Platform (AI-APP) integrations to Databricks, AWS Agentcore, the Gemini Enterprise Agent Platform, Microsoft Azure Copilot Studio, and Salesforce Agentforce.

- Embedding Wiz scanning into vibe-coding services such as Lovable, surfacing vulnerabilities and misconfigurations before code ships; Wiz’s 2025 research found that 20 percent of vibe-coded apps contain security risks.

- Adding inline AI security hooks in IDEs and agent workflows to help developers catch security issues before code is committed.

- Introducing agent-based remediation that can generate targeted fixes as pull requests, accelerating the path from detection to patch.

- Launching a dynamic AI-BOM (AI Bill of Materials) to inventory every AI framework, model, and IDE extension across an environment, a crucial capability for combating shadow AI and unauthorized use of tools like Claude Code.
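To make the AI-BOM idea concrete, here is a minimal sketch of an inventory check. It is not Wiz’s schema or API: the `AIComponent` record, the `find_shadow_ai` helper, and every component name below are hypothetical, chosen only to show how an inventory of frameworks, models, and IDE extensions can be compared against an approved list to surface shadow AI.

```python
from dataclasses import dataclass

# Hypothetical record type for illustration; not Wiz's actual AI-BOM schema.
@dataclass(frozen=True)
class AIComponent:
    kind: str      # "framework", "model", or "ide_extension"
    name: str
    location: str  # where the component was observed (repo, service, workstation)

def find_shadow_ai(inventory, approved):
    """Return components whose (kind, name) pair is not on the approved list."""
    return [c for c in inventory if (c.kind, c.name) not in approved]

# Made-up inventory entries; a real AI-BOM would be discovered automatically.
inventory = [
    AIComponent("framework", "example-agent-framework", "repo:payments-api"),
    AIComponent("model", "example-llm-v1", "service:support-bot"),
    AIComponent("ide_extension", "example-coding-assistant", "laptop:dev-042"),
]
approved = {
    ("framework", "example-agent-framework"),
    ("model", "example-llm-v1"),
}

shadow = find_shadow_ai(inventory, approved)
for component in shadow:
    print(f"unapproved {component.kind}: {component.name} at {component.location}")
```

In this toy data only the IDE extension is flagged. The check is the easy part; the hard part a dynamic AI-BOM addresses is keeping the inventory current as tools appear and disappear.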

The practical implications for security teams

These announcements matter for several reasons. First, automation that reduces human triage time from half an hour to roughly a minute can free analysts to focus on higher-value investigations. Second, automated detection engineering and agentic hunting reduce the reliance on handcrafted rules that quickly become brittle in the face of novel attack techniques. Third, integrating security earlier in the developer workflow — in IDEs and vibe-coding platforms — helps prevent vulnerabilities from ever reaching production, a necessary shift given how fast AI-assisted coding is accelerating deployment cycles.
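For a rough sense of scale, the figures Google cites can be turned into a back-of-the-envelope estimate. The sketch below assumes that every one of the 5 million alerts would otherwise have received a full 30-minute manual analysis, which the article does not claim; treat the result as an upper-bound illustration, not a measured saving. The function name is hypothetical.

```python
# Figures quoted in the article: 30 minutes manual vs. roughly 60 seconds automated,
# across more than 5 million alerts in a year.
MANUAL_MINUTES = 30
AGENT_MINUTES = 1
ALERTS = 5_000_000

def analyst_hours_saved(alerts, manual_min, agent_min):
    """Hours saved if every alert would otherwise get a full manual analysis (assumption)."""
    return alerts * (manual_min - agent_min) / 60

hours = analyst_hours_saved(ALERTS, MANUAL_MINUTES, AGENT_MINUTES)
speedup = MANUAL_MINUTES / AGENT_MINUTES

print(f"{hours:,.0f} analyst-hours")   # 2,416,667 analyst-hours
print(f"{speedup:.0f}x faster per alert")  # 30x faster per alert
```

In practice many alerts are closed without a full investigation, so the real saving is likely smaller. The per-alert speedup is the more dependable number.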

Limits and realistic expectations

None of these tools is a silver bullet. Models that can autonomously discover vulnerabilities also create dual-use risks, and automated detections will need continuous validation to avoid new classes of false positives or blind spots. Organizations will still need mature processes, governance, and skilled teams to interpret and operationalize agent outputs. The goal of these products is to change the balance of effort: to make defensive work faster, more proactive, and more tightly integrated into development and operations.

Looking ahead

The cat-and-mouse game between attackers and defenders is evolving into a contest between autonomous agents. Google Cloud’s agent strategy and Wiz’s cross-cloud, developer-focused integrations are early signs of how enterprise security might adapt: faster detection pipelines, proactive hunts, automated remediation, and a firmer grip on shadow AI. For security leaders, the next challenge is governance: defining how much agency to give automated defenders, how to validate their findings, and how to ensure they operate safely across hybrid and multi-cloud environments.

109 Fake GitHub Repositories Used to Deliver SmartLoader and StealC Malware

A large-scale campaign recently uncovered shows how attackers abused the trust developers…

Lovable AI App Builder Reportedly Exposes Thousands of Projects’ Source Code and Customer Data

A critical Broken Object Level Authorization (BOLA) vulnerability in Lovable, an AI-powered…

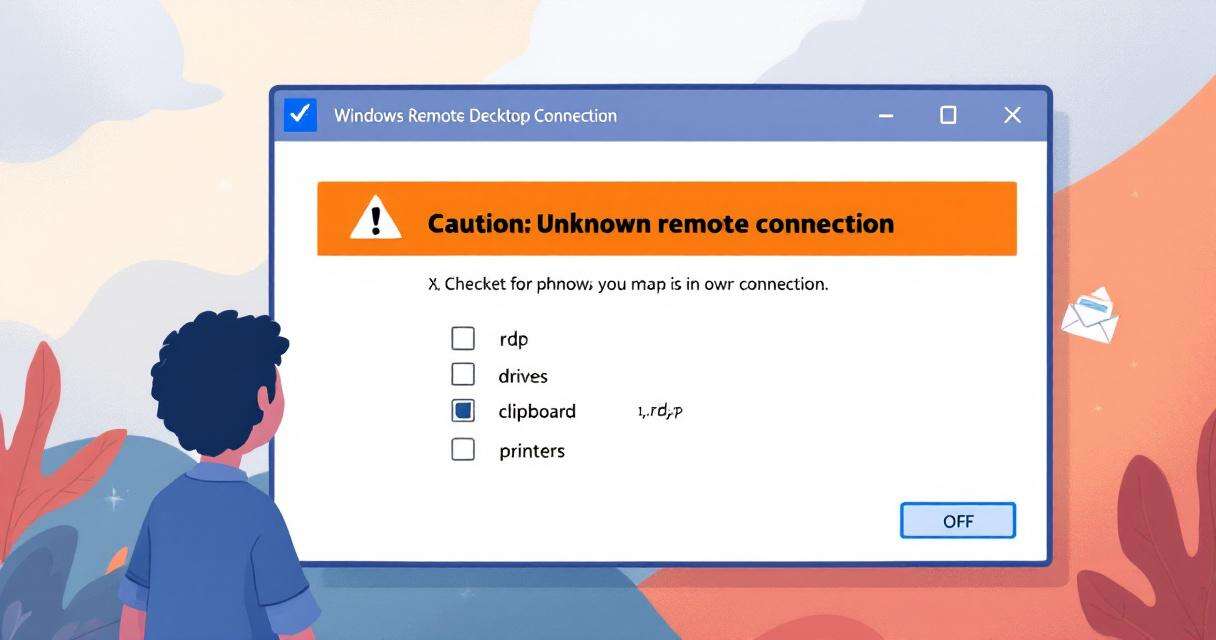

New RDP Alert After April 2026 Security Update Warns of Unknown Connections

Microsoft’s April 2026 Patch Tuesday introduced a small-looking but important change to…

Recently Leaked Windows Zero-Days Now Being Actively Exploited: What You Need to Know

Threat actors have begun abusing three recently disclosed Windows vulnerabilities to escalate…