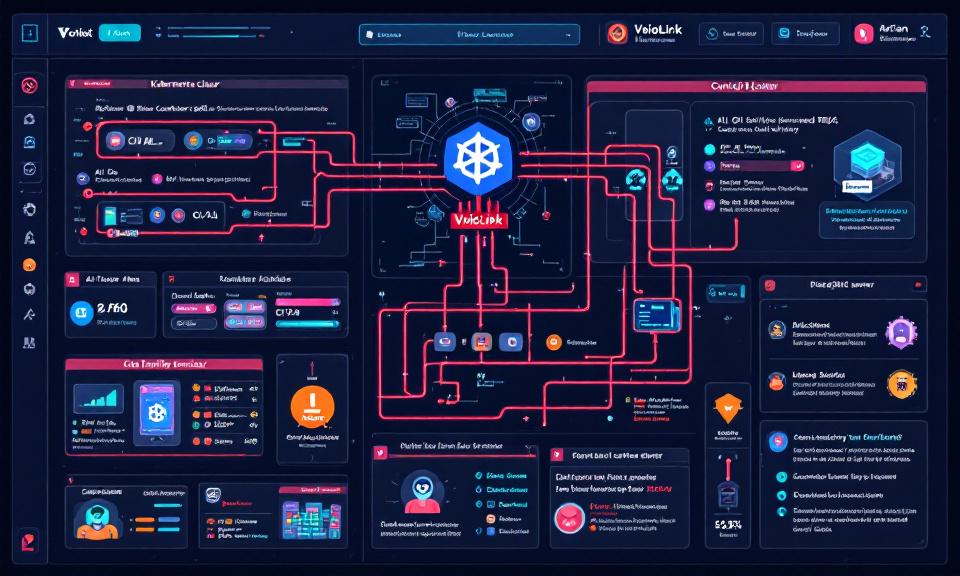

VoidLink Malware Framework: Key Points on How It Targets Kubernetes and AI Workloads

Overview

- VoidLink is a modular malware framework observed targeting cloud-native environments, with emphasis on Kubernetes clusters and AI infrastructure.

- Goals: persistence, lateral movement, data exfiltration (e.g., model theft), and abuse of compute (e.g., crypto-mining or unauthorized training/serving).

- Modularity enables plugins for container escape, kubeconfig harvesting, and targeted abuse of GPU resources.

Threat model

- Targets: misconfigured clusters, exposed control planes, CI/CD pipelines, model registries, and nodes with elevated privileges.

- Adversary profile: financially motivated groups and opportunistic attackers seeking compute, data, or access for follow-on operations.

- Attack surface: public APIs, leaked credentials, vulnerable container images, unsecured model artifacts, and under-monitored GPU nodes.

Primary attack vectors (high-level)

- Compromised credentials: stolen kubeconfigs, cloud keys, or CI tokens used to access clusters.

- Supply chain: tampered images or compromised registries that deliver malicious containers.

- Lateral deployment: creation of DaemonSets, Jobs, CronJobs, or sidecar containers to persist and spread.

- Exploits and misconfigurations: leveraging exposed dashboards, insecure kubelet endpoints, or permissive RBAC.

- AI-specific abuse: loading of backdoored model artifacts, unauthorized dataset exfiltration, or illicit use of GPUs.

Indicators of compromise (defensive, non-actionable)

- Sudden creation of privileged DaemonSets, ClusterRoles, or namespaces without change control.

- Unusual outbound traffic from nodes that normally have limited egress (especially to unfamiliar IP ranges or cloud buckets).

- Abnormal GPU utilization patterns on nodes not scheduled for heavy AI workloads.

- Unexpected processes or binaries inside containers, or containers running images not in approved registries.

- Anomalies in CI/CD pipelines: unknown commits, new deploy keys, or unexpected image pushes.

- New service accounts with broad permissions or unexpected kubeconfig downloads.
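Several of these indicators can be checked against Kubernetes audit logs. The sketch below is a minimal example, assuming audit events are written as JSON lines by the log backend and use the `audit.k8s.io/v1` Event fields `stage`, `verb`, `objectRef` and `user`. The watched-resource set and the `APPROVED_USERS` allowlist are hypothetical and should mirror your own change-control process. Because it only reads request metadata, spotting a *privileged* DaemonSet would additionally require an audit policy that records request bodies.

```python
import json
from typing import Iterable, Iterator

# Resources whose creation outside change control is treated as an indicator.
# Names follow the audit objectRef.resource convention (lowercase plural).
WATCHED_RESOURCES = {
    "daemonsets",
    "clusterroles",
    "clusterrolebindings",
    "namespaces",
    "serviceaccounts",
}

# Hypothetical allowlist of identities allowed to make these changes
# (for example, a GitOps controller). Replace with your own.
APPROVED_USERS = {"system:serviceaccount:argocd:argocd-application-controller"}


def flag_suspicious_events(lines: Iterable[str]) -> Iterator[dict]:
    """Yield a summary of each create on a watched resource by an unapproved user."""
    for line in lines:
        line = line.strip()
        if not line:
            continue
        event = json.loads(line)
        # Audit backends may emit one event per stage; count each request once.
        if event.get("stage", "ResponseComplete") != "ResponseComplete":
            continue
        ref = event.get("objectRef") or {}
        user = (event.get("user") or {}).get("username", "")
        if (
            event.get("verb") == "create"
            and ref.get("resource") in WATCHED_RESOURCES
            and user not in APPROVED_USERS
        ):
            yield {
                "user": user,
                "resource": ref.get("resource"),
                "namespace": ref.get("namespace"),
                "name": ref.get("name"),
                "time": event.get("requestReceivedTimestamp"),
            }
```

For example, `with open("audit.log") as fh: hits = list(flag_suspicious_events(fh))` would return one summary per flagged request. A generator keeps memory flat on large log files; in practice the same filter would usually run inside a SIEM or log pipeline rather than as a standalone script.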

Impact on AI workloads

- Model theft: exfiltration of model weights or proprietary datasets.

- Model poisoning/backdoors: insertion of hidden triggers into training pipelines or model artifacts.

- Service disruption: overload or sabotage of model-serving endpoints, affecting availability and trust.

- Resource theft: illicit use of GPUs or clusters to run unauthorized training/mining tasks, increasing cost and degrading performance.

Detection and monitoring (recommendations)

- Centralized logging and audit collection: enable and retain Kubernetes audit logs and cloud provider logs; monitor for sensitive API calls.

- Runtime behavioral monitoring: deploy host/container runtime detection (Falco, eBPF-based tools, EDR) to flag unusual process or network activity.

- GPU and resource telemetry: baseline normal GPU usage and alert on anomalies or spikes linked to unauthorized pods.

- Image and registry monitoring: enforce image provenance, scan images for malware, and watch for unapproved registries or tag changes.

- CI/CD observability: monitor pipeline changes, credential usage, and image signing/attestation failures.
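To make "baseline normal GPU usage" concrete, here is a minimal per-node z-score check. It assumes you already export per-node GPU utilization samples (in percent) from your metrics pipeline. The 3.0 threshold and the 1.0-point floor on the standard deviation are illustrative choices, not tuned values, and flagging nodes with no history is a deliberately cautious default.

```python
from statistics import mean, stdev
from typing import Dict, List


def gpu_anomalies(
    baseline: Dict[str, List[float]],
    current: Dict[str, float],
    z_threshold: float = 3.0,
) -> List[str]:
    """Return nodes whose current GPU utilization is far above their own baseline.

    baseline: per-node historical utilization samples (percent, 0-100).
    current:  latest utilization sample per node.
    """
    flagged = []
    for node, value in current.items():
        history = baseline.get(node, [])
        if len(history) < 2:
            # No usable baseline: surface the node for review rather than ignore it.
            flagged.append(node)
            continue
        mu = mean(history)
        # Floor the spread so a perfectly flat baseline cannot divide by zero
        # or turn tiny fluctuations into huge z-scores.
        sigma = max(stdev(history), 1.0)
        if (value - mu) / sigma > z_threshold:
            flagged.append(node)
    return flagged
```

A node that normally idles at a few percent and suddenly runs near 100% is flagged, while a training node running at its usual high load is not. A z-score only catches deviations from a node's own history, so it works best alongside scheduler-aware checks, such as comparing GPU use against the pods that are supposed to be running on that node.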

Mitigation and hardening (practical controls)

- Least privilege: tighten RBAC, grant minimal permissions to service accounts, and rotate credentials frequently.

- Network segmentation: apply Kubernetes NetworkPolicies and cloud VPC controls to limit pod-to-pod and pod-to-internet access.

- Pod security posture: enforce PodSecurity admission with the restricted profile, disallow hostPath volumes and privileged containers, and prevent containers from running as root.

- Protect secrets: use a secrets manager (e.g., HashiCorp Vault) and KMS-backed encryption for Kubernetes Secrets, avoid storing credentials in plaintext, and restrict secret access to the workloads that need it.

- Image hygiene: use signed images, immutable tags, vulnerability scanning, and a curated internal registry for production.

- Protect the control plane: restrict network access to the API server, require authentication and authorization for all API requests, and lock down kubelet access (for example, by disabling anonymous authentication).

- Limit external exposure: minimize public endpoints for model registries and internal dashboards; require strong auth and MFA.
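Admission control blocks new risky pods, but existing workloads also need auditing. The sketch below checks a Pod object, as returned by `kubectl get pod -o json`, against a few of the pod-security controls listed above, using standard Pod spec fields. It is a partial check under that assumption, not a full implementation of the restricted profile.

```python
from typing import Any, Dict, List


def pod_violations(pod: Dict[str, Any]) -> List[str]:
    """Return hardening violations found in a Pod object's spec."""
    spec = pod.get("spec", {})
    problems = []

    # Sharing host namespaces weakens container isolation.
    for field in ("hostNetwork", "hostPID", "hostIPC"):
        if spec.get(field):
            problems.append(f"pod uses {field}")

    # hostPath volumes expose the node filesystem to the container.
    for vol in spec.get("volumes", []):
        if "hostPath" in vol:
            problems.append(f"volume {vol.get('name')} mounts a hostPath")

    pod_sc = spec.get("securityContext", {})
    for c in spec.get("initContainers", []) + spec.get("containers", []):
        sc = c.get("securityContext", {})
        name = c.get("name")
        if sc.get("privileged"):
            problems.append(f"container {name} is privileged")
        # A container-level runAsNonRoot overrides the pod-level value.
        if sc.get("runAsNonRoot", pod_sc.get("runAsNonRoot")) is not True:
            problems.append(f"container {name} may run as root")
        # Unless explicitly set to false, privilege escalation is permitted.
        if sc.get("allowPrivilegeEscalation", True):
            problems.append(f"container {name} allows privilege escalation")
    return problems
```

Running this over every pod in a cluster gives a quick inventory of workloads that would fail a move to the restricted profile. Any pod that reports violations is also worth comparing against the indicators of compromise above.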

Incident response and recovery (high-level)

- Isolation: cordon and isolate suspected nodes; restrict network egress for affected namespaces.

- Forensics: preserve logs, container images, and node snapshots for analysis; prioritize evidence collection over destructive remediation.

- Credential revocation: rotate service account tokens, API keys, and cloud credentials that may be compromised.

- Rebuild over repair: where trust is uncertain, redeploy workloads from verified images and known-good CI artifacts rather than repairing compromised containers.

- Post-incident hardening: apply lessons learned, tighten controls, and run tabletop exercises focused on AI/ML pipeline attacks.
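For the isolation step, one option is to restrict egress for an affected namespace with a NetworkPolicy. The sketch below builds such a manifest as JSON. The policy name, the `incident-response` label, and the `ml-serving` namespace are hypothetical, and enforcement assumes the cluster's network plugin supports NetworkPolicy.

```python
import json


def deny_all_egress_policy(namespace: str, name: str = "incident-deny-egress") -> dict:
    """Build a NetworkPolicy that selects every pod in `namespace` for egress isolation."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {
            "name": name,
            "namespace": namespace,
            # Hypothetical label so responders can find and later remove the policy.
            "labels": {"incident-response": "true"},
        },
        "spec": {
            # An empty podSelector selects every pod in the namespace.
            "podSelector": {},
            # Egress is listed with no egress rules, so this policy allows no
            # outbound traffic. Ingress is left unchanged.
            "policyTypes": ["Egress"],
        },
    }


if __name__ == "__main__":
    # "ml-serving" is a hypothetical namespace name.
    print(json.dumps(deny_all_egress_policy("ml-serving"), indent=2))
```

The output can be saved to a file and applied with `kubectl apply -f`. NetworkPolicies are additive allow-lists. If another policy in the namespace already allows egress for the same pods, that traffic is still permitted. Review existing policies as part of containment, and pair this step with `kubectl cordon` on suspected nodes.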

Operational recommendations for AI teams

- Separate environments: isolate model training and serving clusters from general-purpose workloads and from public-facing services.

- Least-privilege model access: restrict which services and users can pull, modify, or deploy models and datasets.

- Model provenance and integrity: use artifact signing and checksums for datasets and model binaries; verify before deployment.

- Cost and telemetry controls: enforce budgets and alerts for unexpected resource usage (GPU, storage, egress).

- Continuous threat modeling: include AI-specific abuse cases in threat assessments and red-team exercises.
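The "verify before deployment" step for models and datasets can be as simple as comparing streamed SHA-256 digests against a manifest. The sketch below assumes a manifest mapping relative paths to expected hex digests. The manifest itself must come from a trusted, ideally signed, source (for example via a tool such as Sigstore), since a checksum only proves integrity relative to whatever manifest you trust.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model weights never load fully into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifacts(root: Path, manifest: Dict[str, str]) -> List[str]:
    """Compare files under `root` with a manifest of {relative_path: expected_sha256}.

    Returns a list of problems; an empty list means every file matched.
    """
    problems = []
    for rel_path, expected in manifest.items():
        path = root / rel_path
        if not path.is_file():
            problems.append(f"missing: {rel_path}")
        elif sha256_file(path) != expected.lower():
            problems.append(f"checksum mismatch: {rel_path}")
    # Files on disk that the manifest does not list are also treated as suspect.
    for path in root.rglob("*"):
        rel = path.relative_to(root).as_posix()
        if path.is_file() and rel not in manifest:
            problems.append(f"unexpected file: {rel}")
    return problems
```

Flagging unlisted files matters for AI artifacts specifically: an extra Python file or pickle dropped next to otherwise untouched weights can be enough to change what a loader executes.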

Governance and policy

- Define clear ownership of ML infrastructure, data, and model registries.

- Require security gates in CI/CD pipelines (scanning, signing, policy checks) before model or image promotion.

- Establish SLAs and playbooks for detecting and responding to AI-targeted compromises.
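A CI/CD promotion gate ultimately reduces to a small policy function that returns reasons to block. The sketch below covers container images only. The registry host is a hypothetical placeholder, and `scan_passed` and `signature_verified` are assumed to come from earlier pipeline steps such as a scanner and a signature check.

```python
import re
from typing import List

# Hypothetical internal registry allowlist; replace with your own hosts.
APPROVED_REGISTRIES = ("registry.internal.example.com/",)

# A digest-pinned reference ends in "@sha256:" followed by 64 hex characters.
DIGEST_RE = re.compile(r"@sha256:[0-9a-f]{64}$")


def promotion_gate(image: str, scan_passed: bool, signature_verified: bool) -> List[str]:
    """Return reasons to block promotion; an empty list means the gate passes."""
    reasons = []
    if not image.startswith(APPROVED_REGISTRIES):
        reasons.append("image is not from an approved registry")
    # Mutable tags such as ":latest" can be re-pointed after review.
    if not DIGEST_RE.search(image):
        reasons.append("image is not pinned by digest")
    if not scan_passed:
        reasons.append("vulnerability/malware scan did not pass")
    if not signature_verified:
        reasons.append("signature or attestation not verified")
    return reasons
```

In a pipeline, a non-empty result would fail the job (for example by exiting with a non-zero status) and print each reason. Returning every reason at once, instead of stopping at the first failure, saves round trips when several controls fail together.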

Conclusion — quick takeaways

- VoidLink-style frameworks highlight the convergence of cloud-native and AI risks: attackers increasingly target orchestration and compute, not just data.

- Defense must be layered: strong identity controls, image/CI security, runtime monitoring, and AI-specific governance together reduce risk.

- Assume compromise for transient workloads: design for rapid detection, containment, and rebuild of affected components.
