A series of drone strikes against Amazon Web Services facilities in the Middle East triggered one of the most significant regional outages in AWS history, disrupting services across the ME-CENTRAL-1 (UAE) region and causing collateral damage in the nearby ME-SOUTH-1 (Bahrain) region. The incident began in the early hours of March 1, 2026, and left many customers scrambling to fail over workloads and recover data for days.

Incident timeline and immediate effects

The first event occurred at approximately 4:30 AM PST on March 1, when mec1-az2 in the UAE was struck by an object AWS initially described in status updates as causing a “localized power issue” with “sparks and fire” reported inside the facility. Local emergency crews shut off power to the site and isolated its generators to contain the blaze. Over the next day, AWS updated its communications: by March 2 the company confirmed that two UAE availability zones (mec1-az2 and, later, mec1-az3) had been directly struck, and that a separate AWS facility in the Bahrain region (ME-SOUTH-1) suffered damage from a nearby strike. AWS attributed the attacks to the ongoing conflict in the region.

With two of three AZs in ME-CENTRAL-1 impaired simultaneously, regional redundancy guarantees were overwhelmed. Amazon S3, DynamoDB, EC2 and other foundational services experienced high failure rates and throttling as the outage cascaded upward through the service stack.

Services affected (preserved table)

At peak disruption AWS reported that 109 services were touched in ME-CENTRAL-1: 25 fully disrupted, 34 degraded, and 50 impacted. The core service impacts reported include the following:

| Service | Status | Impact |

|---|---|---|

| Amazon S3 | Disrupted | High PUT/GET/LIST failure rates |

| Amazon DynamoDB | Disrupted | Elevated error rates, write/read failures |

| Amazon EC2 | Disrupted | Instance launches throttled region-wide |

| AWS Lambda | Disrupted | Heavily dependent on S3/DynamoDB recovery |

| Amazon Kinesis | Disrupted | Cascaded from foundational service failure |

| Amazon CloudWatch | Disrupted | Monitoring degraded |

| Amazon RDS | Disrupted | Database availability impaired |

| AWS Management Console | Disrupted | Partial operational; page errors persisted |

Broader customer impact

The outage extended beyond infrastructure metrics to real-world services in the UAE. Consumer-facing platforms and payment providers relying on the affected region reported service interruptions — for example, ride-hailing and delivery services and several regional payment processors reported degraded or suspended functionality tied to the AWS outage. The event highlighted how concentrated cloud dependencies in a single region can rapidly propagate to customer-facing applications and regional economies.

AWS response and remediation steps

AWS ran parallel recovery tracks: physical repairs at damaged data centers and software-side mitigations to restore partial functionality before full infrastructure restoration. For S3, AWS deployed updates intended to allow some operations to continue under degraded conditions; by March 3 at 8:14 AM PST, AWS reported improvement in S3 PUT and LIST operations for newly written objects, though GET access for pre-existing objects still depended on physical restoration. EC2 remained subject to throttling and DynamoDB error rates continued to be elevated while teams worked to remediate affected tables.

AWS repeatedly advised customers to execute disaster recovery playbooks: fail over to other regions, restore from remote backups, and redirect traffic away from ME-CENTRAL-1. Recommended alternatives included regions in the United States, Europe, and Asia Pacific depending on latency and data residency requirements.

Key takeaways and recommendations

- Geographic risk matters: Hosting critical workloads in regions exposed to active conflict carries physical-risk vectors not present in peacetime environments.

- Design for multi-region resilience: Active-active architectures and regular cross-region backups reduce single-region blast radius.

- Test recovery procedures: Organizations should rehearse failover and restore operations so they can move workloads quickly when an outage occurs.

- Monitor provider advisories: Follow provider status updates and apply recommended mitigations immediately when a regional incident unfolds.

Conclusion

The March outage in AWS’s Middle East region — triggered by drone strikes and compounded by secondary damage to infrastructure — underlined the limits of regional redundancy in the face of simultaneous physical failures. For enterprises operating in geopolitically sensitive areas, the event serves as a concrete case study for strengthening multi-region resilience, testing recovery plans, and re-evaluating the physical risk profile of cloud deployments.

Face-Off: Windows PowerShell vs PowerShell Core — The Real-World Transition

PowerShell has come a long way since its inception, becoming an essential…

Do not trust any public VPN service, Create your own Secure SOCKS5 Proxy for just $5 – Be Free 🙂

If someone ask me to recommend one good proxy service, I would…

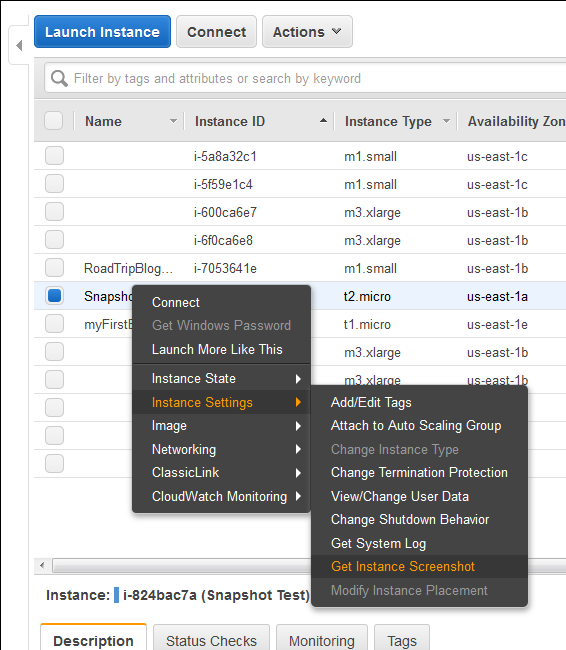

New Feature : EC2 Instance Console Screenshot for Boot Troubleshooting

Finally a great feature add to AWS EC2 instances (HVM virtualization only),…

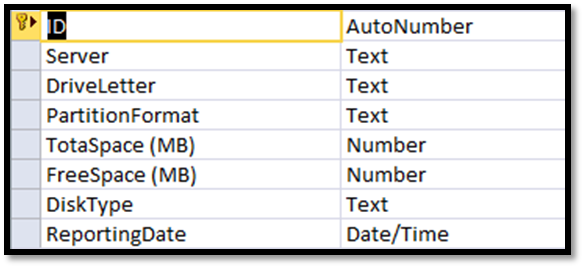

Setup your own Monitoring – Disk Space Utilization Monitoring tool for free

It is always recommended to keep tracking of the disk space utilization…